Getting Indexed and Ranked on Google

Article recap:

- For a page to appear in Google's results, it goes through three steps: crawling, indexing, and ranking.

- To get a page indexed on Google, you must submit your URL, verify your robots.txt directives, and create a sitemap.

- To get a page ranked on Google, you must have links from important pages pointing to it, update your older content with links to the new page, share your URL on social media, improve your search clicks, and bring traffic to it.

Indexing vs Ranking: What is the difference?

Indexing means having a page present in the search engines' databases.

Ranking is about the position of a page on search engine result pages.

For a page to appear on Google's results, it goes through three steps: crawling, indexing, and ranking.

Crawling

New and updated pages are crawled by Googlebot. Googlebot is Google's web crawling robot (also known as a bot or spider): a program running on a massive set of computers, capable of checking billions of pages across the web.

The crawl begins with a list of page URLs generated from previous crawls and extended by the sitemaps that webmasters provide. As Googlebot visits each site, it finds the links on every page and adds them to the list of pages to crawl. This process applies to new websites as well as to websites that were already indexed on Google and have since been updated.
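The list-driven process described above can be sketched in a few lines of Python. This is only a minimal illustration, not Googlebot's actual implementation: the `LINKS` graph and the `crawl` function are hypothetical names, and the "web" is a small in-memory dictionary so the example runs without network access.

```python
from collections import deque

# Hypothetical link graph: each URL maps to the links found on that page.
LINKS = {
    "https://example.com/": ["https://example.com/blog", "https://example.com/about"],
    "https://example.com/blog": ["https://example.com/blog/new-post", "https://example.com/"],
    "https://example.com/about": [],
    "https://example.com/blog/new-post": [],
}

def crawl(seed_urls, links):
    """Visit pages in the order they were discovered, queueing every new link."""
    queue = deque(seed_urls)  # the list of pages waiting to be crawled
    seen = set(seed_urls)     # prevents queueing the same URL twice
    visited = []
    while queue:
        url = queue.popleft()
        visited.append(url)
        for link in links.get(url, []):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited

order = crawl(["https://example.com/"], LINKS)
print(order)
```

In this sketch, the new post is only reached because the blog page links to it, which is why a page with no inbound links is hard for a crawler to discover.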

Indexing

After visiting the websites, Googlebot processes the pages and compiles them into a huge index (much like a library) that records all the words found on each page and their locations. At this stage, other information such as content attributes (e.g., the alt attribute of images) and HTML title tags is also processed.
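To make the idea of an index of "words and their location" concrete, here is a minimal sketch of an inverted index. It is a simplified illustration and not how Google stores its index; the `PAGES` data and the `build_index` function are assumptions made for the example.

```python
import re
from collections import defaultdict

# Hypothetical crawled pages: URL -> visible text.
PAGES = {
    "https://example.com/a": "Fresh coffee beans roasted daily",
    "https://example.com/b": "How to brew coffee at home",
}

def build_index(pages):
    """Map each word to the (url, word position) pairs where it occurs."""
    index = defaultdict(list)
    for url, text in pages.items():
        words = re.findall(r"\w+", text.lower())
        for position, word in enumerate(words):
            index[word].append((url, position))
    return index

index = build_index(PAGES)
print(index["coffee"])
```

Looking up a word then returns every page that contains it without rereading the pages themselves, which is what makes answering a search fast.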

Ranking

As soon as a user searches for one or more words, the search engine looks in its index and delivers the pages related to those words. The results delivered are the ones the algorithm judges most relevant to the user. This relevance is determined by over 200 criteria. Practices such as spam links negatively affect results. The best links are those earned thanks to the quality of the content. For this reason, it pays to invest in quality content that is consistent with the rest of your site.

How to get indexed on Google

1. Submit your URL

The first - and simplest - step you can take to have a page indexed is to submit its URL to Google. In other words, instead of waiting for Google, you can directly ask it to crawl and index your URL by using the URL Inspection tool in Search Console. By doing that, you put your URL in Google's priority crawl queue.

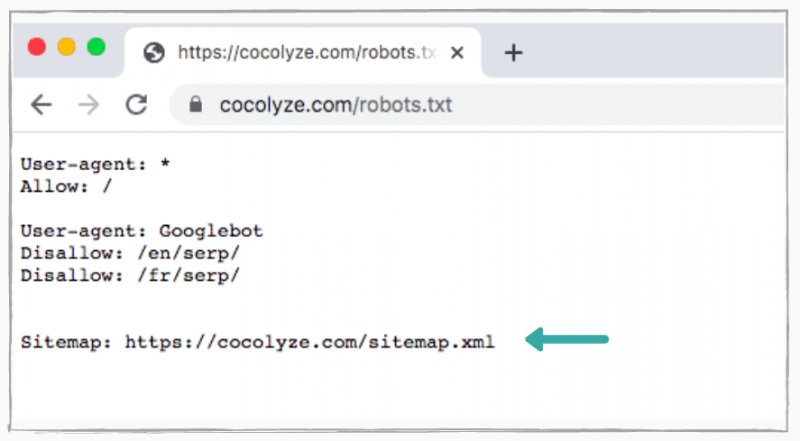

2. Check that your robots.txt file is not blocking Googlebot from crawling your page

A robots.txt file is an important tool that lets you “tell” search engines whether a URL can or cannot be crawled. To check that you are not blocking Googlebot, go to yourpageurl.com/robots.txt and look for a “disallow” directive that prevents your page from being crawled. If there is one (see the examples below), you only need to remove it or replace it with “allow”.

User-agent: Googlebot
Disallow: /

or

User-agent: *
Disallow: /
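If you prefer to check this programmatically, Python's standard library ships a robots.txt parser. The sketch below feeds it rules as text so it runs offline; the `is_crawlable` helper and the `/blog/new-post` path are hypothetical. Note that Python's parser does not resolve conflicting rules exactly as Google does, so treat the result as a first check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

def is_crawlable(robots_txt, user_agent, url):
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# The blocking example from the article.
BLOCKING = """\
User-agent: Googlebot
Disallow: /
"""

# The same file after replacing "disallow" with "allow" for all robots.
OPEN = """\
User-agent: *
Allow: /
"""

PAGE = "https://yourpageurl.com/blog/new-post"  # hypothetical page URL
print(is_crawlable(BLOCKING, "Googlebot", PAGE))
print(is_crawlable(OPEN, "Googlebot", PAGE))
```

To test a live site instead, `RobotFileParser` also offers `set_url()` and `read()`, which download the file before you call `can_fetch()`.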

3. Create a Sitemap

Go back to the end of your robots.txt file and check whether you have provided a sitemap for your website. A sitemap is a file that contains a list of all the pages (URLs) of a site. It is designed to make it easier for search engines to index pages. It works just like a map that helps and guides search robots as they navigate and find pages on the site.

A sitemap is more strongly recommended for some sites than for others. Here are some examples:

- if the site has dynamic content;

- if the site has pages that Googlebot cannot easily find during the crawl process, such as pages with AJAX content or images;

- if the site is new and has few links pointing to it. Since Googlebot crawls the web by following links from one page to another, a site that is not well linked will be difficult to detect;

- if the site has a large archive of content pages that are not well linked to each other, or not linked at all.

You can learn more about creating sitemaps in "Build and submit a sitemap" on Google Search Central.
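As a rough illustration of what such a file contains, the sketch below generates a minimal XML sitemap with Python's standard library. The `build_sitemap` function and the example.com URLs are hypothetical, and only the required `<loc>` element is written for each page; real sitemaps may carry more fields.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return a minimal XML sitemap listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/blog/new-post",
])
print(sitemap_xml)
```

Once the file is published (for example at the hypothetical address https://example.com/sitemap.xml), it can be declared at the end of robots.txt with a line such as `Sitemap: https://example.com/sitemap.xml`.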

How to get ranked on Google

In the previous section, we explained what you should do to get your content indexed faster. In this section, you will find some additional advice about both ranking and indexing since, in some cases, the two are directly related.

1. Having links from important pages

You just wrote brand new content and you want it to get indexed and ranked. An easy way to do this is to make sure that important pages of your website link to it. Important pages can be your homepage, your blog, or your resources pages, for example. By doing this, you tell Google that the new page needs to be crawled, which puts it in the regular crawl queue. It also helps improve the ranking of your new content, since these links give Google quality signals that help it determine how to rank the page.

2. Go back to your older content

Now that you have important pages linking to your new content, you should also consider adding links in your older content that point to the brand new content you just wrote. This gives Google another quality signal that helps with the indexing and ranking of your new content.

3. Use social media to share your new content

It may sound obvious, but sharing your content on social media has a huge impact on its ranking, especially if your followers are ready to share it too. This is because social activity gives Google an external signal of the content's credibility.

4. Improve your search clicks

Remember when we said you should share your content socially? You are still going to do that, but instead of sharing the direct URL of the page, share a Google search result URL for the keywords you are trying to rank for. It leads the user to the same page, but through a different path. By doing this, you will increase your click-through rate and help your page rank for auto-suggest queries.

Example:

- Direct URL: https://cocolyze.com/fr/blog/rediger-un-bon-article-de-blog/

- Search Result URL: https://www.google.com/search?q=outil+redaction+cocolyze
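A search result URL like the one in the example above can be built from a keyword phrase with Python's standard library. This is a small sketch; `search_result_url` is a hypothetical helper name, and the only query parameter used is `q`, as in the example.

```python
from urllib.parse import urlencode

def search_result_url(keywords):
    """Build a Google search URL for the given keyword phrase."""
    # urlencode escapes special characters and turns spaces into "+".
    return "https://www.google.com/search?" + urlencode({"q": keywords})

url = search_result_url("outil redaction cocolyze")
print(url)  # https://www.google.com/search?q=outil+redaction+cocolyze
```

Using `urlencode` rather than joining words by hand keeps the URL valid when the keywords contain accents or punctuation.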

5. Bring traffic to the URL

Despite some controversy about its effectiveness, it seems that Google is (re)considering the traffic of a URL as a ranking criterion (it used to be one in the past). The reason is easy to understand: a URL with a good amount of traffic indicates that its content is valuable to users, and because of that, it should be ranked accordingly.